3DUU

3D Urban Understanding

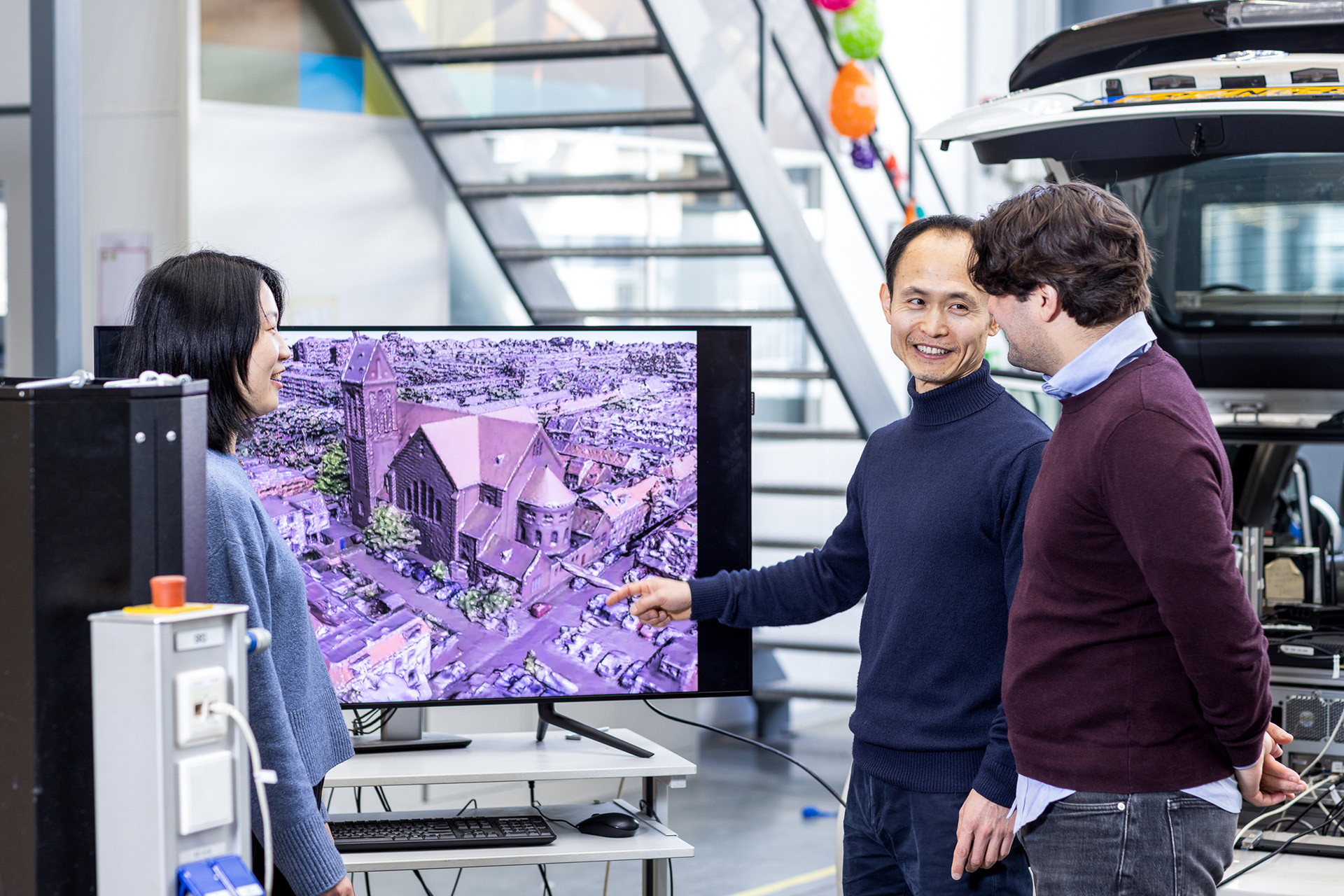

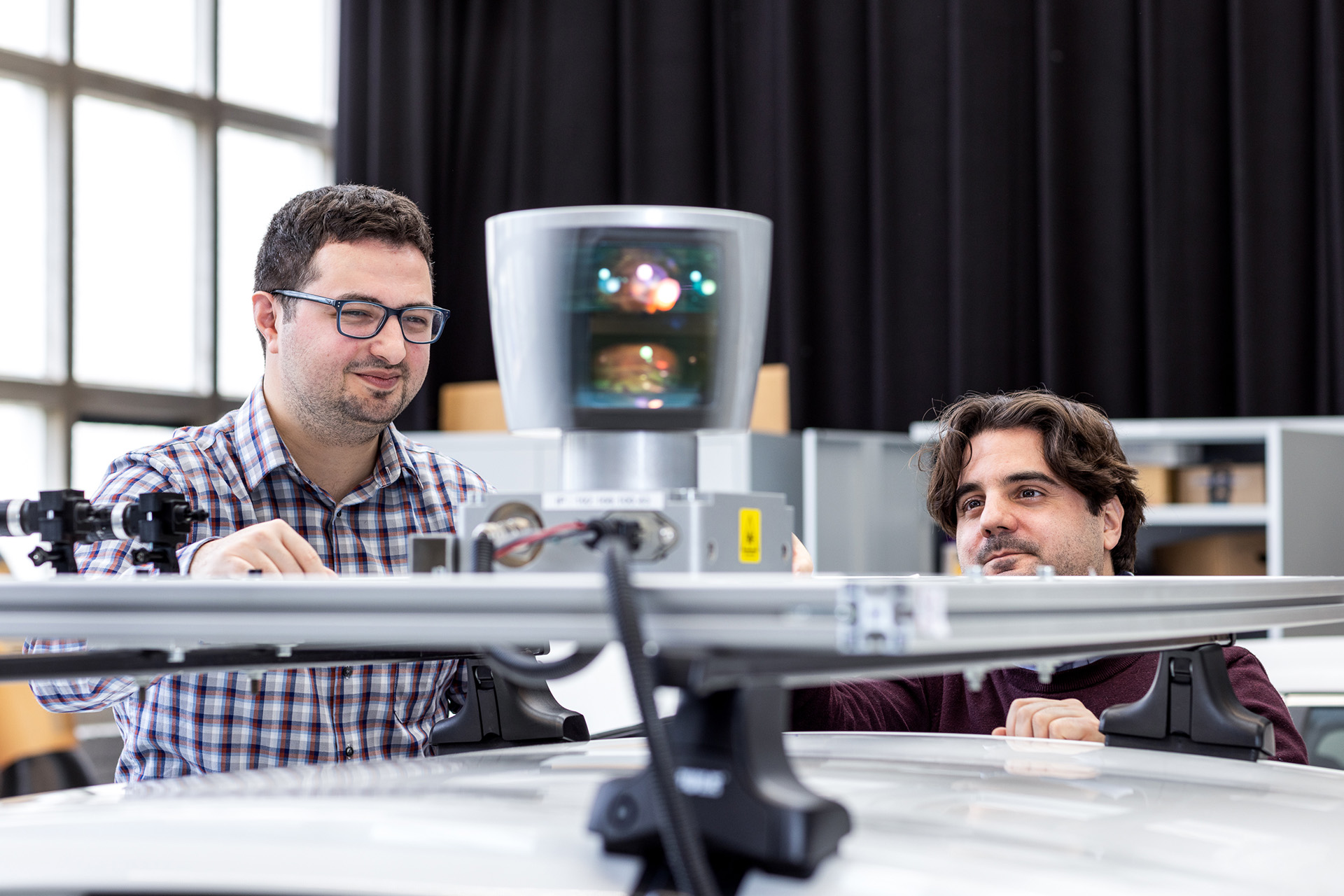

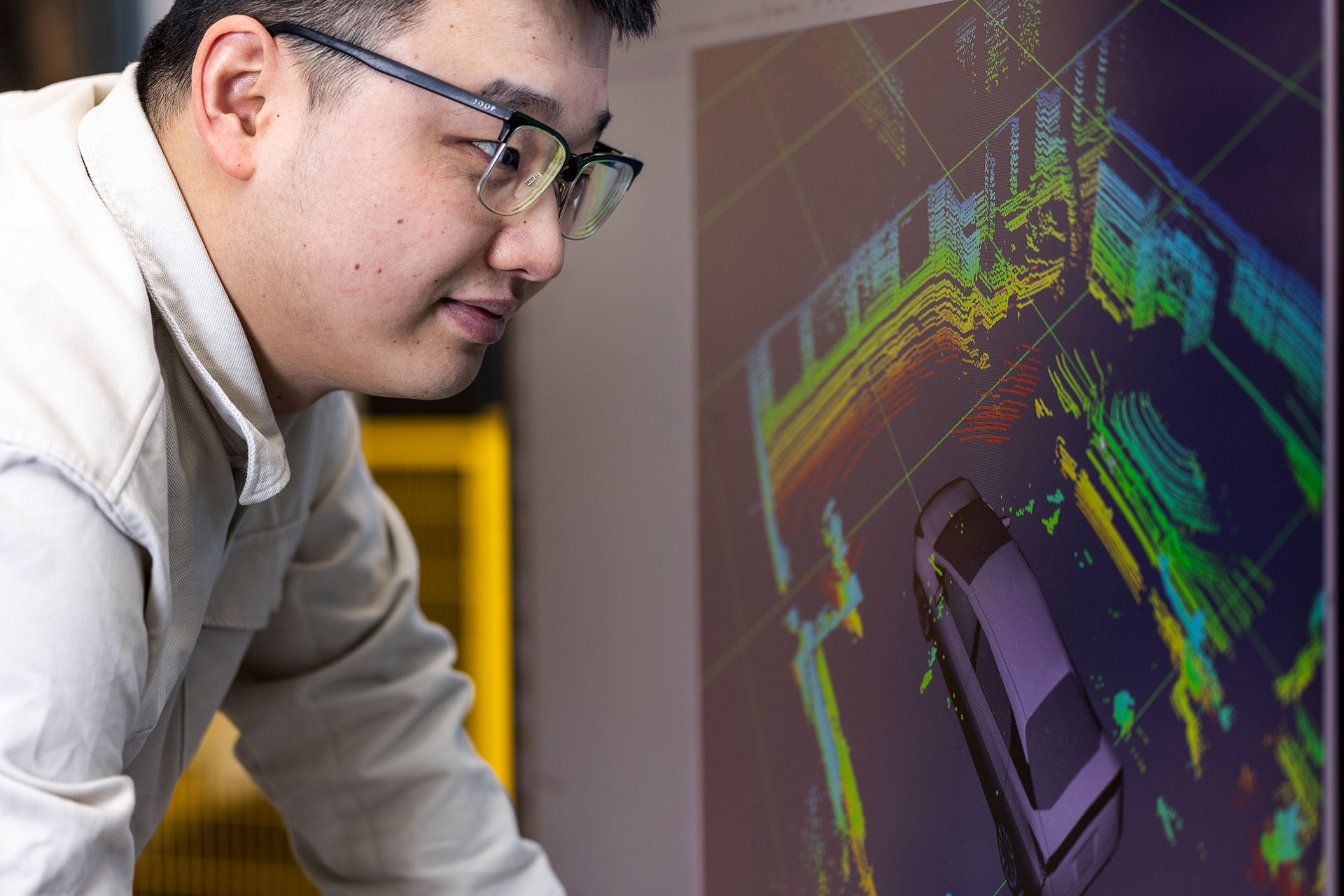

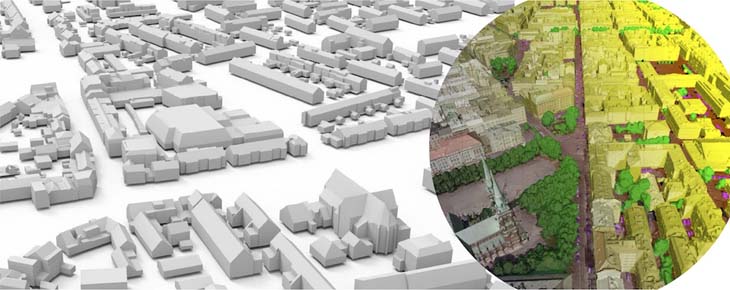

Through developments in 3D sensor technology, photogrammetry and computer vision, real-world urban scenes can now be captured at large scale in the form of images or point clouds. Such data could support powerful models for applications such as urban planning and self-driving vehicles. However, robustly and efficiently turning these large, dynamic outdoor scenes into a usable semantic 3D representation remains challenging.

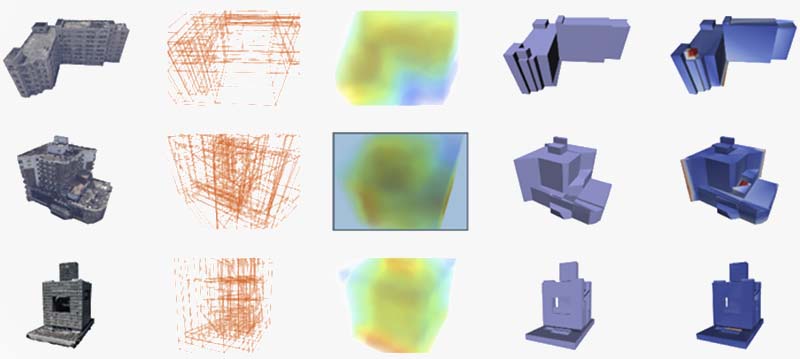

In the 3DUU Lab, we develop new methods and techniques that automatically recognise and model objects in real-world scenes in 3D by combining data from various sources, such as aerial photos and vehicle-mounted laser scanners. We investigate localising 2D images in the 3D world, reconstructing 3D scenes from such images, and subsequently recognising objects from 3D data or even from multiple sensing modalities simultaneously. Our techniques can thus enrich the data with information about the location and type of objects and surfaces in the scene, such as buildings, streets, trees, traffic lights, and terrain.

The 3DUU Lab is part of the TU Delft AI Labs programme.

Education

Courses

Master Projects

Openings

Ongoing

- Wouter de Leeuw: Ensemble learning for Visual Place Recognition

- Linjun Wang: LoD3 reconstruction of Manhattan-world buildings

- Noortje van der Horst: Inverse procedural modelling of tree growth using multi-temporal point clouds

- Camilo Caceres Tocora: Automated Semantic Segmentation of Aerial Imagery using Synthetic Data