Synthetic data for a clean digital future

It’s often said that ‘data is the new oil’, and not without reason: our digital world is powered by data. All algorithms that enable or enhance internet interactions need data – just as our physical world often needs fuel. Digital ‘fuel’ comes in different forms, and tabular data, meaning any data that fits into the rows and columns of a table, is the most common and valuable. Examples include your social ‘likes’, your credit card purchase history or even your entire medical history. Such tabular data can be very detailed, which is why it is extremely valuable. But that level of detail is also a problem: it threatens user privacy. Together with experienced co-founders Iman Alipour and Edwin Kooistra, and supported by Delft Enterprise, Lydia Chen started BlueGen.ai to do something about that: they are developing synthetic data. And just like synthetic oil, synthetic data should make the world a lot cleaner.

Three birds with one stone

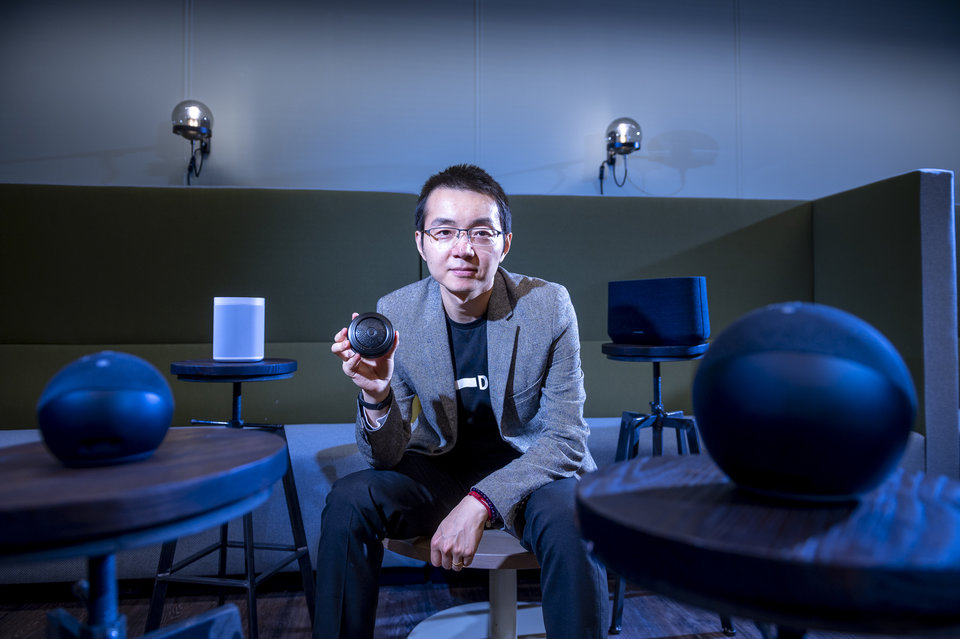

Chen likes to start a conversation about her research with a sobering reality check: “Big Data, in most cases, is nothing more than a buzzword. Most datasets are incredibly small, and although they’re potentially valuable, they are also hard to work with. Most of the time, no one knows what to do with the data. In fact, that can even be true when datasets are large enough.” Chen is concerned with the few exceptions: organisations that collect gigantic datasets and do not make them public or share them. One example is the private data that social media platforms collect on a large scale and use to sell targeted advertising space. “Because I know very well what the power of good datasets and algorithms can be, I am quite wary of this data hunger. I try to avoid handing over my personal data as much as possible, although it's quite difficult sometimes.”

Although Chen is personally motivated to improve privacy, and is well aware of big data’s challenges and opportunities, big data itself was never the main focus of her academic research. It was only when she was approached spontaneously by an insurance company that all pieces of the puzzle suddenly fell into place. She still lights up when she talks about it: “It just clicked! An insurer asked me if I could think of a way for them to share data with their sister companies, without infringing on their customers' privacy. They briefly raised the possibility of synthetic data, and I realised we could actually do this with our most recent algorithms.

“In fact, we could help not only this particular insurance company, but probably many other organisations, by reliably making ‘fake data’ sets that are statistically identical to original data sets. You can share fake data like that without any risks, because you are not sharing private data. It was a fantastic idea, which arose out of the work we were doing together as a whole team.”

Synthetic data delivers many benefits at once: users can effortlessly increase the size of datasets, they gain insights into patterns, and they can ask the right questions. Perhaps most importantly, data hunger no longer has to come at the expense of privacy. Investors soon saw the potential, leading Chen and her team to commercialise the initiative with their start-up BlueGen.ai.

Data hunger doesn't have to come at the expense of privacy anymore!

A responsible alternative

Chen's synthetic data is not the only initiative to handle datasets more responsibly. Since the introduction of GDPR, companies have used all sorts of methods. The most important are anonymisation, differential privacy and encryption. The first solution is the simplest: you remove all data that can be directly related to one individual, such as first and last names. However, Chen says that the resulting dataset is anything but private: “If the rest of the data is specific enough, sensitive data can still be traced back to one specific person.” An example is hospital data: even without a name, you can recognise individual patients simply by looking at the remaining patient details. Differential privacy is therefore a better solution for privacy: it slightly modifies all data that is potentially sensitive or private. Unfortunately, the quality of the dataset also drops considerably, and if you use differential privacy carelessly, sensitive data can still be recognised.
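Both weaknesses can be made concrete with a small sketch. The records, field names and the `epsilon` value below are invented for illustration and do not come from BlueGen.ai. The first function counts how many 'anonymised' rows are still unique on a handful of remaining details. The second shows one common form of differential privacy, the Laplace mechanism applied to a count, which adds noise to an aggregate rather than to each record.

```python
import random
from collections import Counter

# Hypothetical "anonymised" hospital records: names removed, details kept.
records = [
    {"postcode": "2628", "birth_year": 1958, "sex": "F", "diagnosis": "diabetes"},
    {"postcode": "2628", "birth_year": 1958, "sex": "F", "diagnosis": "asthma"},
    {"postcode": "2611", "birth_year": 1990, "sex": "M", "diagnosis": "fracture"},
    {"postcode": "2613", "birth_year": 1971, "sex": "F", "diagnosis": "diabetes"},
    {"postcode": "2611", "birth_year": 1985, "sex": "M", "diagnosis": "asthma"},
]


def unique_rows(rows, quasi_identifiers):
    """Return the rows whose combination of quasi-identifiers occurs only once."""
    def key(row):
        return tuple(row[q] for q in quasi_identifiers)

    counts = Counter(key(row) for row in rows)
    return [row for row in rows if counts[key(row)] == 1]


def noisy_count(rows, predicate, epsilon, rng):
    """Laplace mechanism for a counting query (sensitivity 1)."""
    true_count = sum(1 for row in rows if predicate(row))
    # The difference of two Exp(epsilon) draws is Laplace noise with scale 1/epsilon.
    noise = rng.expovariate(epsilon) - rng.expovariate(epsilon)
    return true_count + noise


# Anyone who knows a patient's postcode, birth year and sex can single these rows out.
exposed = unique_rows(records, ["postcode", "birth_year", "sex"])
print(f"{len(exposed)} of {len(records)} rows are unique without a name")

# A smaller epsilon means more noise: better privacy, worse data quality.
rng = random.Random(0)
diabetes_count = noisy_count(records, lambda r: r["diagnosis"] == "diabetes", 0.5, rng)
print(f"noisy diabetes count: {diabetes_count:.2f} (true count is 2)")
```

The trade-off Chen describes is visible in `epsilon`: lowering it protects individuals better, but the reported count drifts further from the truth.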

Encryption is another option: that should make data unreadable without a password – it would be no more than a gigantic series of random numbers. “The drawback is that big data companies are very clever. They always manage to gain insights into private data from encrypted data sets. It’s like putting something in a box. You can’t see what it is any more, but if you shake the box then you can still guess what's inside, and that ignores the chance that the box can sometimes be opened.”

Synthetic data has none of the disadvantages above. “Besides”, Chen quickly adds, “with synthetic data you can enlarge data sets at will. That is almost equally important. I am convinced that even in the near future, 20 to 30% of the data could be synthetic, comparable with the market for synthetic leather. You can identify fake leather if you look carefully, but if you have no other material available then it’s a great solution – just like synthetic data.

“I hope we’ll help a lot of young researchers who still have to use data sets that are much too small and very difficult to use. I know from experience that this can be a handicap to research. BlueGen.ai aims to let everyone increase the size of a dataset with ease, so that you can focus on what really matters: data analysis.”

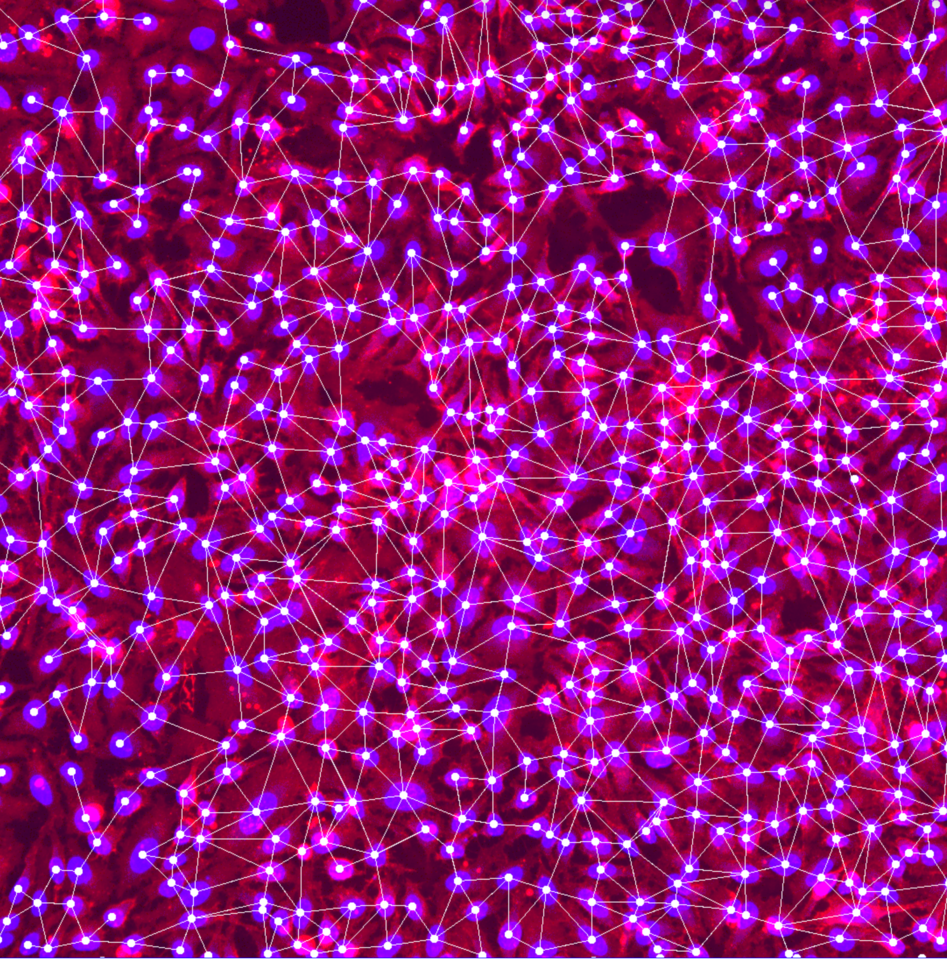

A computer that fools itself

But how do you create, or synthesise, data? It all starts with a 'generative adversarial network', a form of machine learning where you ask a program to fool itself. That program consists of two parts: a generator and a discriminator. The generator tries to make data that looks as real as possible, for example a table listing the body weights of a group of people. At first, the table will be horribly off, suggesting lightweight individuals of a few kilos and people weighing a tonne. It is up to the discriminator to see through this, by comparing the fake data with real data. If the discriminator notices a big difference, the generator is set to work again, with instructions to do it better this time. The generator creates increasingly accurate data, until the discriminator can no longer distinguish it from the real data. At that point the GAN (short for 'generative adversarial network') has succeeded in making synthetic data.
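The tug-of-war between generator and discriminator can be sketched in plain Python. This is a toy illustration under strong assumptions, not CTAB-GAN or BlueGen.ai's code. The 'real' body weights are simulated (mean 75 kg, standard deviation 12 kg), the generator is a single linear function of random noise, and the discriminator is a logistic model on the weight and its square. All names and settings are hypothetical.

```python
import math
import random
import statistics


def sigmoid(s):
    """Numerically stable logistic function."""
    if s >= 0:
        return 1.0 / (1.0 + math.exp(-s))
    e = math.exp(s)
    return e / (1.0 + e)


def train_toy_gan(real, steps=3000, batch=64, lr=0.02, seed=0):
    """Train a one-column toy GAN and return a function that samples fake values."""
    rng = random.Random(seed)
    mu, sd = statistics.fmean(real), statistics.pstdev(real)
    scaled = [(x - mu) / sd for x in real]  # standardise the real column

    a, b = 0.3, -2.0    # generator x = b + a * z, deliberately far off at the start
    w1 = w2 = c = 0.0   # discriminator D(x) = sigmoid(w1*x + w2*x^2 + c)

    def d(x):
        return sigmoid(w1 * x + w2 * x * x + c)

    for _step in range(steps):
        # Discriminator step: learn to score real rows high and fake rows low.
        g1 = g2 = gc = 0.0
        for _i in range(batch):
            xr = rng.choice(scaled)
            xf = b + a * rng.gauss(0, 1)
            er = d(xr) - 1.0   # gradient of -log D(xr) with respect to the logit
            ef = d(xf)         # gradient of -log(1 - D(xf)) with respect to the logit
            g1 += er * xr + ef * xf
            g2 += er * xr * xr + ef * xf * xf
            gc += er + ef
        w1 -= lr * g1 / batch
        w2 -= lr * g2 / batch
        c -= lr * gc / batch

        # Generator step: change a and b so that fakes look more real to D.
        ga = gb = 0.0
        for _i in range(batch):
            z = rng.gauss(0, 1)
            xf = b + a * z
            dx = (d(xf) - 1.0) * (w1 + 2.0 * w2 * xf)  # gradient of -log D(xf) w.r.t. xf
            ga += dx * z
            gb += dx
        a -= lr * ga / batch
        b -= lr * gb / batch

    def sample(n):
        """Draw n synthetic values, converted back to the original units."""
        return [mu + sd * (b + a * rng.gauss(0, 1)) for _ in range(n)]

    return sample


random.seed(1)
real_weights = [random.gauss(75, 12) for _ in range(1000)]  # simulated stand-in data
sample = train_toy_gan(real_weights)
synthetic_weights = sample(1000)

print(f"real:      mean {statistics.fmean(real_weights):.1f} kg, "
      f"std {statistics.pstdev(real_weights):.1f} kg")
print(f"synthetic: mean {statistics.fmean(synthetic_weights):.1f} kg, "
      f"std {statistics.pstdev(synthetic_weights):.1f} kg")
```

Once training has finished, `sample(n)` can produce as many rows as needed, which is how a trained generator lets you enlarge a dataset. A real tabular GAN uses neural networks for both parts and has to handle many columns of mixed types, which this sketch ignores.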

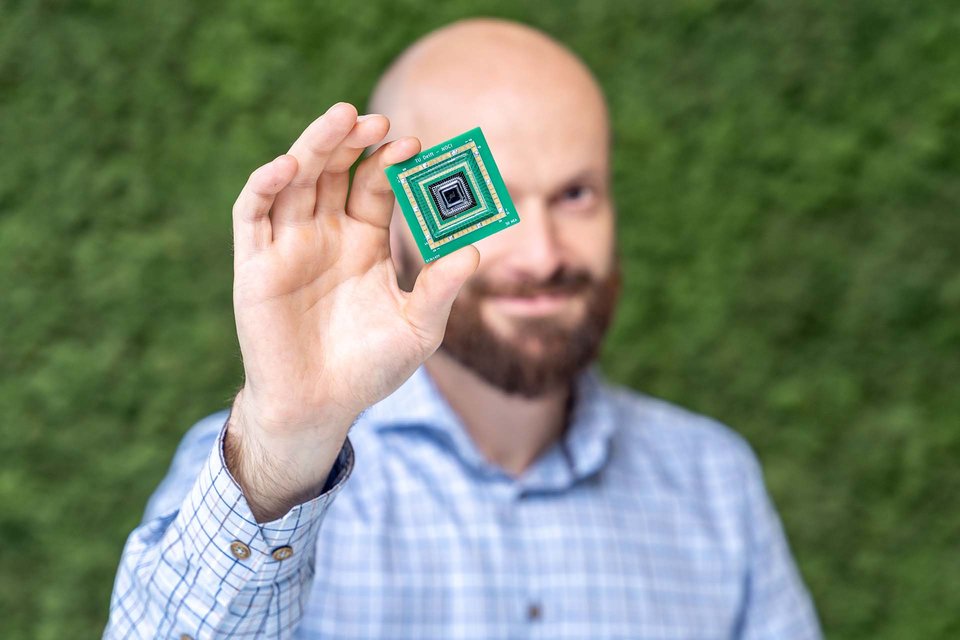

Chen: “Initially, we focused on improving GANs. For example, we used techniques that are already used for making visual data. Those programs are already very advanced and can make extremely convincing fake photos. That’s why they are already widely used in fashion: new clothing designs are often made by GANs. The fashion designer then only has to tweak the most beautiful GAN designs”, Chen explains, clearly fascinated.

The transformation of such 'tried and tested' GANs into programs that generate tabular data came in the form of CTAB-GAN. This invention by Chen and her team is the most effective algorithm ever created for replicating tabular data. The first positive results were published in February 2021. Chen: “We're not quite there yet, and our next big milestone is dealing with the risk of biases. When you train a GAN, some biases are built in. To take the example of a hospital again, if you train a GAN with urban hospital data, it won’t reflect a rural hospital. If you rely on it blindly, you run the risk of ignoring major problems, and we want to eliminate this kind of risk.”

Fake data, but statistically almost as good as real data.

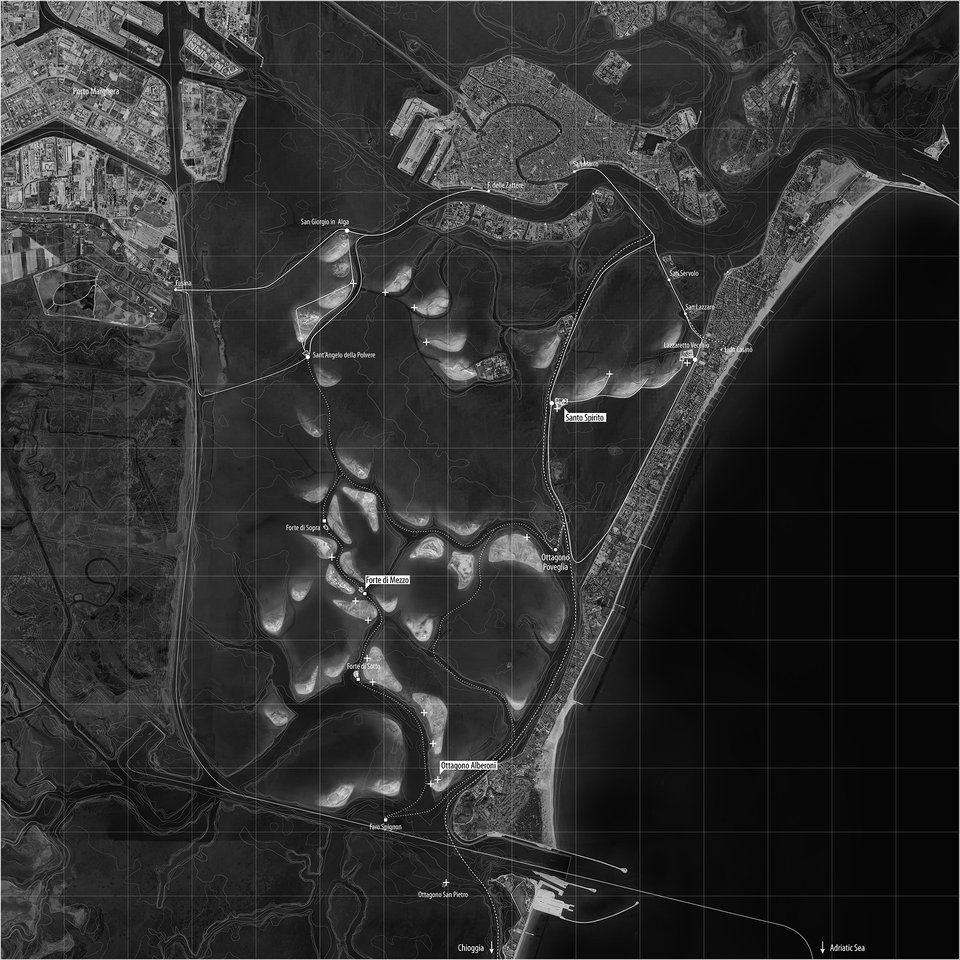

Hospitals are an appropriate example, because they are one of BlueGen.ai's first big markets. Chen: “We want to connect all hospitals. Currently all of them have incredibly valuable patient data, containing insights that could potentially save lives in other hospitals, but that data cannot leave the organisation.” Fortunately, there is a solution: a 'federated network' of GANs. Within such a network, hospitals can freely share their data insights with each other. Each hospital receives BlueGen.ai tools with which it can train its own GAN, on the condition that it returns the GAN afterwards. “We act as a 'federator', collecting and combining all of the different GANs. By redistributing those unique GANs you are effectively sharing data, although in a roundabout way. It’s fake data, but statistically almost the same as real data, and therefore extremely valuable for research purposes.”
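The flow of such a federated network can be sketched as follows. This is a simplified illustration under stated assumptions, not BlueGen.ai's implementation. Each 'GAN' is replaced by a stand-in generator that only stores a mean and a standard deviation, the blood-pressure figures are simulated, and every name (`LocalGenerator`, `train_locally`, `federate`) is hypothetical.

```python
import random
import statistics
from dataclasses import dataclass


@dataclass
class LocalGenerator:
    """Stand-in for a trained GAN: holds model parameters, never patient records."""
    mean: float
    std: float
    n_records: int

    def sample(self, rng):
        return rng.gauss(self.mean, self.std)


def train_locally(records):
    """Runs inside the hospital; only the fitted generator leaves the building."""
    return LocalGenerator(
        mean=statistics.fmean(records),
        std=statistics.pstdev(records),
        n_records=len(records),
    )


def federate(generators, n_samples, rng):
    """Federator: pool the generators and draw synthetic values from them,
    picking each generator in proportion to the data it was trained on."""
    weights = [g.n_records for g in generators]
    chosen = rng.choices(generators, weights=weights, k=n_samples)
    return [g.sample(rng) for g in chosen]


rng = random.Random(42)
# Simulated systolic blood pressure at two hospitals (hypothetical figures).
urban = [rng.gauss(128, 14) for _ in range(600)]
rural = [rng.gauss(138, 17) for _ in range(400)]

generators = [train_locally(urban), train_locally(rural)]
pooled_synthetic = federate(generators, 5000, rng)

print(f"pooled real mean:      {statistics.fmean(urban + rural):.1f}")
print(f"pooled synthetic mean: {statistics.fmean(pooled_synthetic):.1f}")
```

Because the federator only ever sees generators, the pooled synthetic data reflects both the urban and the rural hospital, while neither hospital hands over a single real record.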

Such 'federated networks' can be applied to many more fields, from sharing data with partners to enlarging datasets. Chen sees BlueGen.ai growing into the connecting factor in a community of data scientists – from hospitals all the way to bankers and insurers. She wants BlueGen.ai to grow into the leading authority in the field of synthetic data. “Who knows, perhaps even as the market leader,” she adds with a smile. “In any case, with our combination of technological and business experience, we have all the ingredients to make this a success. So we can develop important tools to help data scientists from all kinds of different corners. The big data companies are very well aware of our potential: they are keeping a close eye on us.”

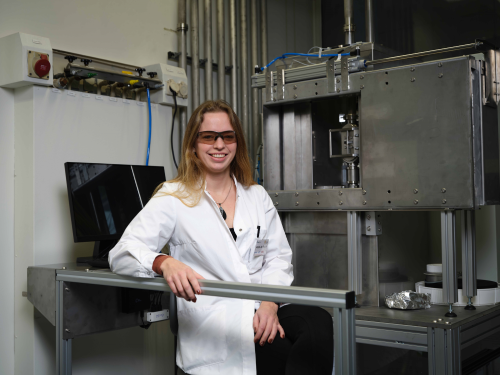

Lydia Chen
