Socially Perceptive Computing Lab

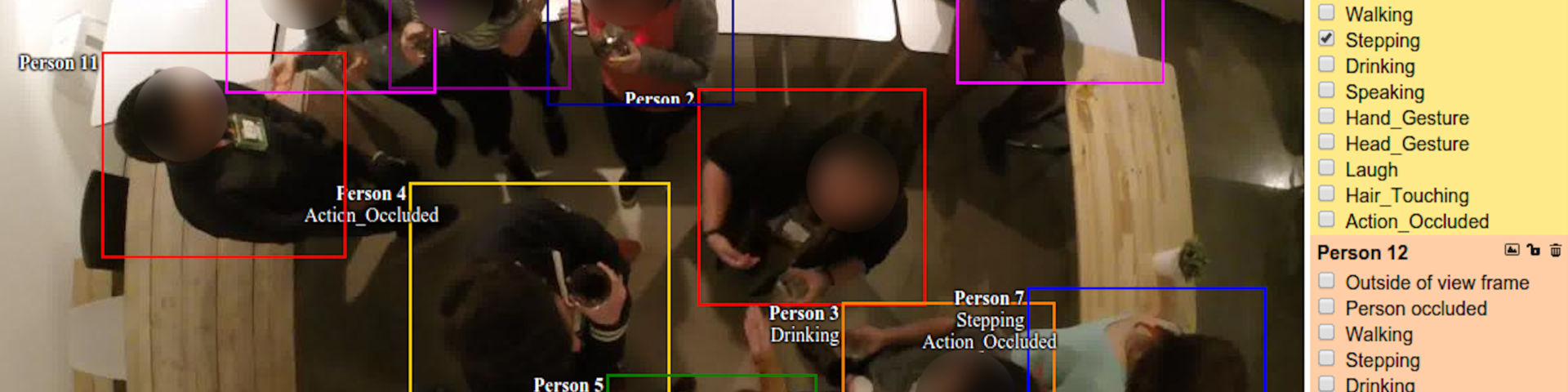

The Socially Perceptive Computing Lab investigates novel methods for the automated interpretation of social and affective behaviour. Research areas include social signal processing, multisensor fusion, computer vision, pattern recognition and machine learning, and ubiquitous computing. Our expertise is mainly focused on developing automated methods to analyse social signals from non-verbal behaviour with multiple sensing modalities (e.g. wearables, video, and audio). We specialise in handling noisy sensor data collected in the wild, where non-conventional sensing modalities need to be exploited.

The activities of our lab can be grouped into the following key research themes, centred on developing automated methods for the perception of human behaviour and experience:

- Individual: Social perception is heavily influenced by the need to understand the individual as a social entity. This involves the perception, and possibly also the prediction, of socially relevant behaviours (social actions), for example through gesture or speech detection. It also involves the perception of personal experience such as mood or sentiment, and of intrinsic motivators for behaviour such as personality.

- Social: This involves the modelling of social coordination during face-to-face interactions. This can happen at the level of pairs, groups, or crowds for measuring phenomena such as attraction, cohesion, or deception.

We have a track record in investigating the entire perception pipeline from data collection and labelling to signal processing, computer vision and pattern recognition when using both conventional and non-conventional sensing modalities to measure human experience in the wild. We regularly collaborate with other disciplines to ground our research in ecologically valid settings.