Colour 3D printing helps robot see its own fingers

With the rise of AI and advanced robotics, researchers and designers are looking for ways to make robots safer and more adaptive. Think of robots that closely interact with humans, like care robots. Or robots that handle fragile objects, such as grippers for fruits and vegetables of various sizes.

Now here’s the problem: these kinds of interactions are highly unpredictable, so the robots need to be able to sense the position of their own fingers and body. Researchers at the Faculty of Industrial Design Engineering (IDE) of Delft University of Technology have developed a way for robots to ‘see’ their own fingers using a combination of multicolour 3D printing and soft robotics.

Their research, ‘Color-based Proprioception of Soft Actuators Interacting with Objects’, was recently published in IEEE/ASME Transactions on Mechatronics.

Colour for self-sensing robots

Multicolour 3D printing is often used to make realistic-looking (communication) models or even to reproduce paintings by the Old Masters. IDE researcher Rob Scharff: “New research shows how the technique can also be used beyond aesthetic applications, by printing multicolour patterns to fabricate self-sensing soft robots. Colour patterns inside the robot’s body translate deformation of the soft robot into a change of colour that can be picked up by miniaturised embedded colour sensors. Information on the shape of the soft robot can then be retrieved from this sensor data: the robot now ‘sees’ the object through the change in shape of its own ‘hand’ and can adjust accordingly.”

With the help of these colour patterns, the shape sensing accuracy is much higher than that of alternative sensing techniques. This research video shows how accurate shape sensing can be achieved, even when the soft actuator is interacting with unknown objects. Accurate self-sensing (proprioception) is considered a big step towards closed-loop control of soft robots.

Sensing in soft robots

Why do we need colour patterns? Why can’t we just use traditional sensors in soft robots? Scharff: “Traditional robots typically have joints that revolve around a single axis, so a single encoder in each joint is enough to reconstruct the robot’s shape. In contrast, soft robots can bend, stretch and twist at the same time, making existing sensors unsuitable. Think about the trunk of an elephant, for example. It can take on very complex shapes, which cannot be described by a handful of angle measurements. For soft robots, we need sensors that can capture a large variety of deformations. Besides that, the sensors need to be flexible so as not to impede the actuator’s movement.”
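To make the contrast concrete, here is a minimal sketch of the rigid-robot case, assuming a planar arm whose joints each rotate about a single axis. The function name `planar_chain_points` and the link lengths are hypothetical and not taken from the paper; the point is only that one angle per joint is enough to fix the whole shape.

```python
import math


def planar_chain_points(link_lengths, joint_angles):
    """Return the (x, y) position of every joint of a planar serial arm.

    Each joint angle is measured relative to the previous link, which is
    what a single encoder per joint would report (illustrative assumption).
    """
    x, y, heading = 0.0, 0.0, 0.0
    points = [(x, y)]
    for length, angle in zip(link_lengths, joint_angles):
        heading += angle                 # accumulate the relative joint angles
        x += length * math.cos(heading)  # step along the current link
        y += length * math.sin(heading)
        points.append((x, y))
    return points


# Two links of unit length: the first points straight up, the second bends
# back to horizontal. Two encoder readings describe the full shape.
points = planar_chain_points([1.0, 1.0], [math.pi / 2, -math.pi / 2])
```

A soft actuator has no such short list of angles: its shape varies continuously along its length, so it needs sensing that responds to many kinds of deformation at once.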

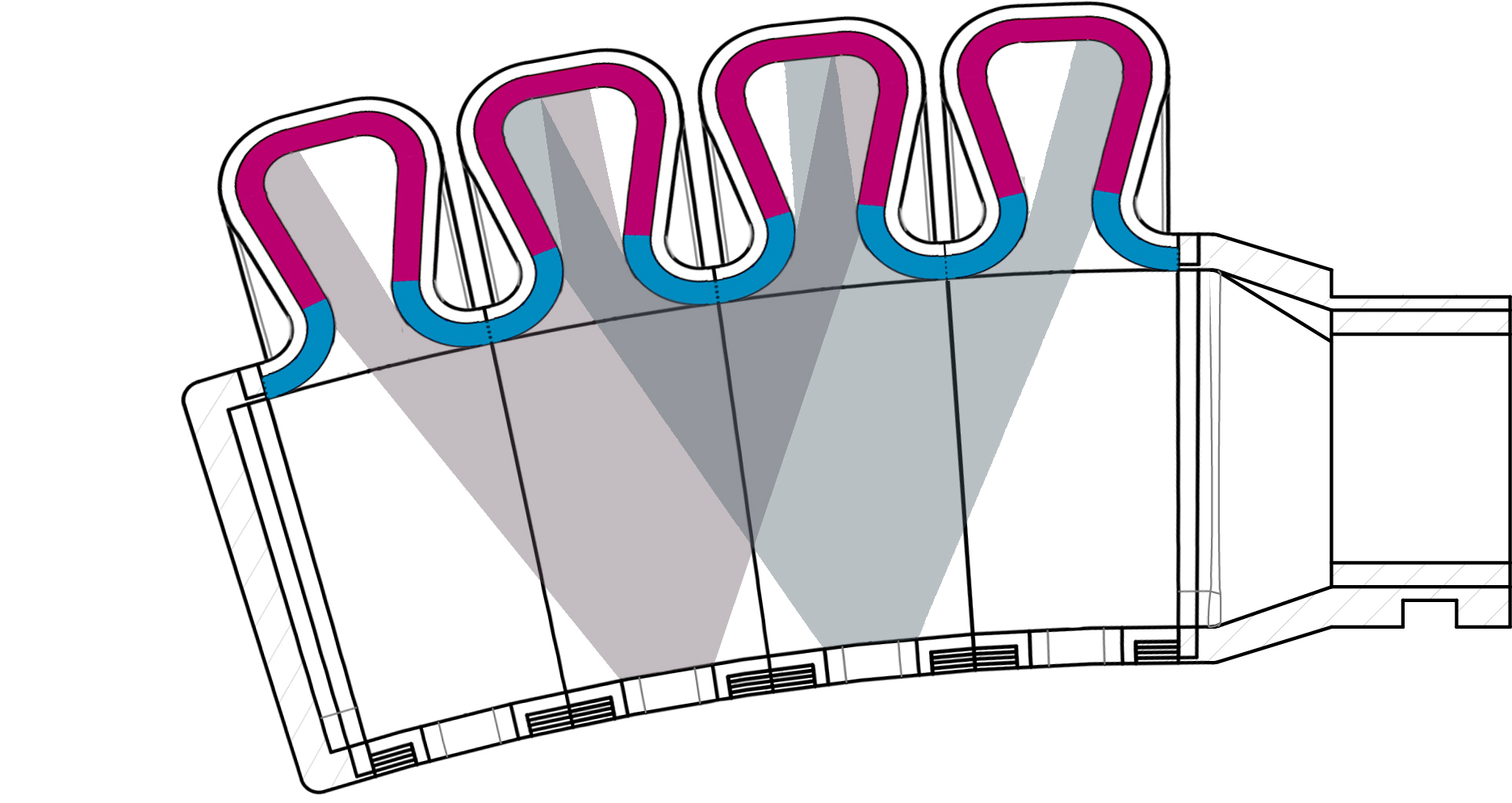

The bending actuators shown in this research consist of an air chamber with an inextensible layer at the bottom and extensible bellows at the top. Inflating the air chamber causes the bellows at the top to expand while the bottom stays the same length, which creates a bending motion. “We 3D print a colour pattern inside these top bellows and observe these colour patterns with colour sensors at the inextensible bottom of the actuator”, says Scharff. “When the actuator is inflated, colours that were previously occluded from the sensors start to appear. We use this change in colour and the changes in light intensity to predict the shape of the actuator.”
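The bending mechanism can be illustrated with an idealised geometric relation. This is a sketch under the assumption that the unloaded actuator bends into a single circular arc; the symbols are introduced here for illustration and do not come from the paper. Let $L_{\text{bottom}}$ be the fixed length of the inextensible layer, $L_{\text{top}}$ the length of the inflated bellows, $h$ the distance between the two, $R$ the radius of curvature of the bottom layer and $\theta$ the bending angle:

```latex
\begin{aligned}
L_{\text{bottom}} &= R\,\theta, \\
L_{\text{top}} &= (R + h)\,\theta, \\
\theta &= \frac{L_{\text{top}} - L_{\text{bottom}}}{h}.
\end{aligned}
```

The more the top lengthens relative to the bottom, the larger the bending angle. An actuator that is pressed against an object generally does not follow a single arc, which is where sensing the actual shape becomes necessary.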

The position of colour

The researchers calibrated the sensors using a feedforward neural network. To train the network, they collected 1000 samples of sensor values with corresponding actuator shapes. The actuator shapes were represented by 6 markers on the inextensible layer that were tracked by a camera. The inputs of the network are the readings from 4 colour sensors with 4 channels (red, green, blue, white) each.
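The calibration step can be sketched as a small regression network. What follows is a minimal illustration and not the authors' implementation: the hidden-layer size, the learning rate, the choice of two coordinates per marker and the synthetic stand-in data are all assumptions. Only the sample count, the 4 × 4 sensor inputs and the 6 markers come from the article.

```python
import numpy as np

rng = np.random.default_rng(0)

N_SAMPLES = 1000   # number of training samples (from the article)
N_INPUTS = 4 * 4   # 4 colour sensors x 4 channels (red, green, blue, white)
N_OUTPUTS = 6 * 2  # 6 markers; (x, y) per marker is an assumption
N_HIDDEN = 32      # hidden-layer width is an assumption

# Synthetic stand-in data: real use would load measured sensor readings (X)
# and camera-tracked marker positions (Y) instead.
X = rng.uniform(0.0, 1.0, size=(N_SAMPLES, N_INPUTS))
W_fake = rng.normal(0.0, 0.5, size=(N_INPUTS, N_OUTPUTS))
Y = np.tanh(X @ W_fake)

# One hidden layer with tanh activation, linear output layer.
W1 = rng.normal(0.0, np.sqrt(1.0 / N_INPUTS), size=(N_INPUTS, N_HIDDEN))
b1 = np.zeros(N_HIDDEN)
W2 = rng.normal(0.0, np.sqrt(1.0 / N_HIDDEN), size=(N_HIDDEN, N_OUTPUTS))
b2 = np.zeros(N_OUTPUTS)


def forward(inputs):
    """Return hidden activations and predicted marker coordinates."""
    hidden = np.tanh(inputs @ W1 + b1)
    return hidden, hidden @ W2 + b2


def mse(pred, target):
    """Mean squared error over all samples and outputs."""
    return float(np.mean((pred - target) ** 2))


_, pred = forward(X)
initial_loss = mse(pred, Y)

learning_rate = 0.1  # assumption
for _ in range(300):
    hidden, pred = forward(X)
    grad_out = 2.0 * (pred - Y) / pred.size        # dLoss/dPred
    grad_W2 = hidden.T @ grad_out
    grad_b2 = grad_out.sum(axis=0)
    grad_pre = (grad_out @ W2.T) * (1.0 - hidden ** 2)  # back through tanh
    grad_W1 = X.T @ grad_pre
    grad_b1 = grad_pre.sum(axis=0)
    # Plain full-batch gradient descent update.
    W1 -= learning_rate * grad_W1
    b1 -= learning_rate * grad_b1
    W2 -= learning_rate * grad_W2
    b2 -= learning_rate * grad_b2

_, pred = forward(X)
final_loss = mse(pred, Y)
```

Once trained on real data, a network like this maps a single 16-value sensor reading to an estimate of all marker positions in one forward pass, which is what makes it usable inside a control loop.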

Scharff: “Our method is able to predict the position of each of the markers within a margin of error that is typically between 0.025 and 0.075 mm. Our method also performs well on load cases that were not present in the training data. Unlike existing sensors in soft robotics, we are able to measure exactly how the gripper is bent around an object. Therefore, we believe this work is a big step towards being able to accurately move and grasp objects with soft robots.”

Making it move

The actuators are 3D printed as a single piece using PolyJet multi-material printing. The bellows are fabricated using the flexible Agilus Black material, whereas the coloured structure is composed of alternating segments of VeroCyan and VeroMagenta. The custom printed circuit board (PCB) sensors are mounted on small 3D printed plugs that are pushed into the bottom of the actuator to create an airtight fit. “Colour sensors are very cheap and accessible, which makes it easy to implement the technique in other soft robots as well”, concludes Scharff.

The paper was authored by Rob Scharff (TU Delft), Rens Doornbusch (project MARCH), Zjenja Doubrovski (TU Delft), Jun Wu (TU Delft), Jo Geraedts (TU Delft) and Charlie Wang (The Chinese University of Hong Kong).

Jun Wu

- +31 (0)15 27 84858

- j.wu-1@tudelft.nl

Room 32-B-3-030


Zjenja Doubrovski

- +31 (0)15 278 63 67

- e.l.doubrovski@tudelft.nl

Room B-3-030